The B2B Marketer’s Guide to High Value Actions + How To Combine Them With Bombora Intent Data

A practitioner’s field guide to the behavioural signals that fuel modern B2B activation, why scoring beats counting, and the five questions every B2B marketer should ask

The wrong belief most B2B marketers carry

Got asked at an event a few weeks ago, “WTF is an HVA, really?”

I’m guilty of using the term as if everyone’s clear on it. Let’s face it, that’s a solid adtech trait in itself, one that predates my 15+ years building solutions in the space. The industry has a habit of adopting acronyms before defining them, then making confused colleagues feel like the slow ones in the room.

So here’s the definition that should have been agreed years ago, properly framed for how B2B actually works.

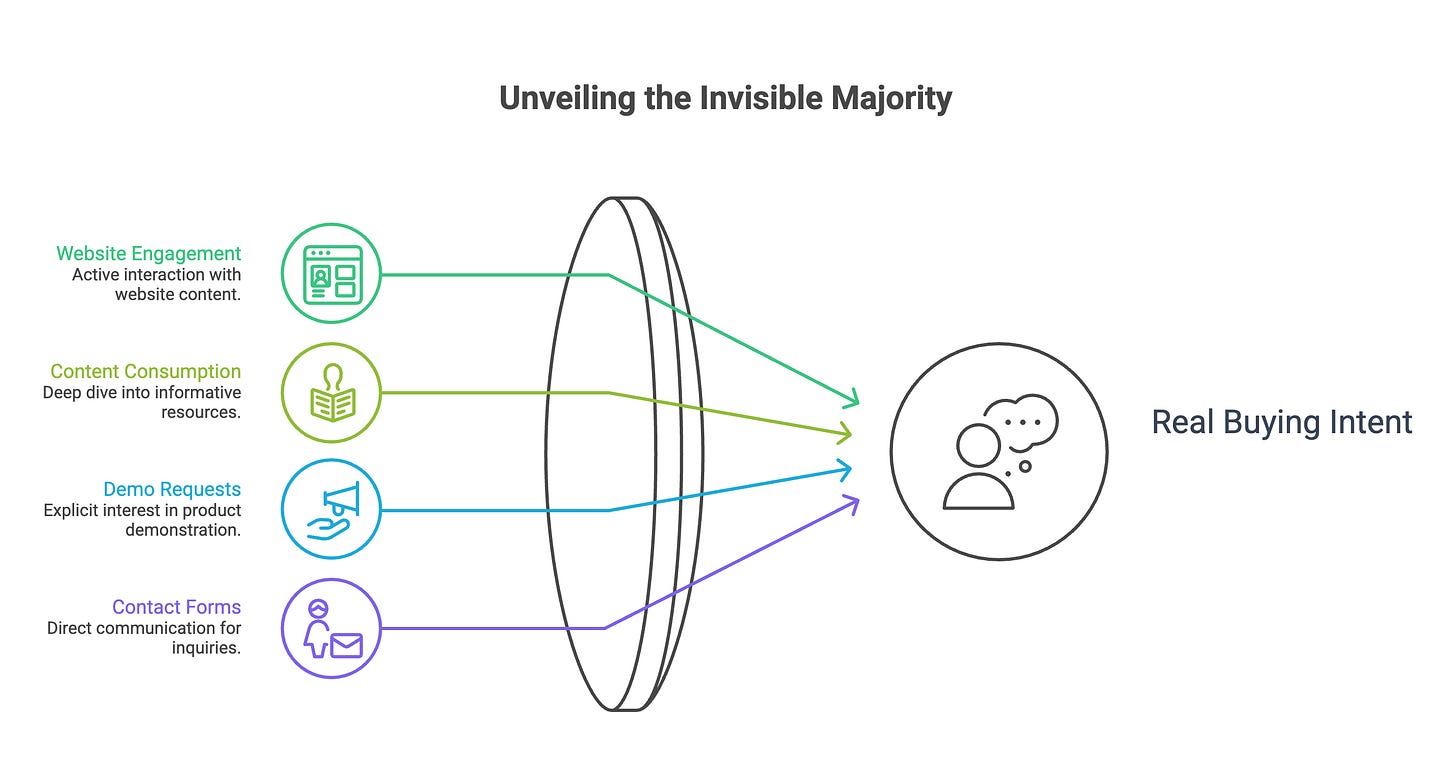

A High Value Action is a behaviour on your site that indicates real buying intent, but is not a conversion in the traditional sense.

It is what happens before the prospect decides to put their hand up. It is the invisible majority of buying activity that most B2B reporting frameworks can’t see, can’t score, and can’t act on.

If you’re running B2B advertising in 2026 and you’re still measuring success in form fills, you’re optimising against the last few percent of the funnel. Everything in front of it is invisible. Most of what actually matters is invisible.

This piece is the field guide I would have wanted on day one in B2B adtech, written for the practitioner who has heard the term, used it confidently in a meeting, and at some point quietly realised they couldn’t define it precisely.

This is the second in a sixteen-week series unpacking the B2B programmatic stack, the way it’s actually operated by people who run it. Not the version vendors pitch.

What you’ll learn in this newsletter

Why the traditional conversion model is structurally broken for B2B, and what a High Value Action actually is when properly defined

The pressure-sensor framework for thinking about HVAs, how individual signals cluster into account-level momentum, and why a single visit is noise but four across three people is signal

The full taxonomy of HVAs B2B teams should be capturing, organised by intent type, and the obvious ones most stacks miss

How HVAs combine with third-party intent providers like Bombora, 6sense and TechTarget to produce a complete in-market picture, rather than competing with them

The account-stitching problem, why GA4 alone can’t solve it, and what it takes to read a buying committee’s behaviour as a coordinated signal

Five diagnostic questions to audit your own HVA architecture, and what most B2B teams will discover when they answer them honestly

I was talking about this on LinkedIn this week

The B2B conversion problem (skip if you’ve felt this for years)

If you’ve run B2B marketing for any length of time, you’ve watched the same scene play out: a six-figure deal closes, the SDR team gets the credit, the marketing attribution report shows the source as “direct” or “unknown inbound,” and nobody can really explain how the account moved from cold to ready.

This is not because attribution is broken in B2B, though it is. It’s because the entire conversion measurement framework that consumer marketing taught us to use was built for a different problem.

In B2C, the conversion event almost always happens on the website. Someone sees an ad, lands on a product page, adds to basket, checks out, done. The transaction is the conversion. The site is the funnel. The funnel is measurable. Click attribution mostly holds up because the buyer is a single person making a single decision in a single session, often within the same hour.

B2B doesn’t work like any of that.

The decision-maker rarely buys on the website. The buying committee rarely visits as a single coordinated group. The sales cycle rarely fits inside a single browser session. The final commit usually happens in a contract sent over email, sometimes negotiated for weeks, often involving procurement, legal, security review, and a champion internally building the case across departments that never touch the website at all.

The website is a research environment. It is where the buying committee learns about you, evaluates you, and works out whether you can survive their procurement process. The decision happens elsewhere.

This is the structural problem the form-fill conversion model can’t solve. When the actual buying event happens outside the measurement environment, every conversion-led metric becomes a proxy. And most proxies are weak.

Lead form fills are a proxy for interest, and a weak one. They capture the moment a prospect decided to identify themselves, which is almost always after they’ve already decided you’re worth a conversation. Demo bookings are a proxy for serious consideration, but they sit even further down the funnel, often after months of unobserved evaluation. Webinar registrations capture topic interest, but they don’t capture vendor interest. None of these are buying intent. They are commitments to a conversation, made after the buying intent has already formed.

What B2B teams actually need is a way to read intent before the commitment to a conversation is made. That’s where High Value Actions step in.

What an HVA actually is, properly defined

Strip the jargon and a High Value Action is a behavioural signal that captures the cost a prospect is willing to pay, in time and attention, to evaluate you before they’re ready to engage.

The cost matters. That’s what separates an HVA from any other page view.

A homepage visit costs the visitor almost nothing. They might have arrived by accident, clicked a paid ad without conscious intent, or be a competitor doing reconnaissance. There’s no way to score that single event meaningfully.

I like to use a mental ‘cost’ model, putting myself in the decision-maker’s shoes.

A return visit to the pricing page costs more. The visitor has navigated back, deliberately, to a piece of information that matters. A side-by-side comparison page read costs more still. They are now in active evaluation. A security and compliance documentation download requires that the visitor has progressed in their internal stakeholder management to the point where IT or procurement is asking questions they need answers for.

Each of these behaviours has a price the visitor paid to perform it. That price is what makes the behaviour a signal rather than a noise event.

I think of HVAs like pressure sensors. A single sensor firing tells you almost nothing. A cluster of sensors firing in the same area, within a short time window, from devices that resolve back to the same organisation, tells you something specific is happening. That cluster is the signal. The individual readings are the components.

The job of the HVA framework is two-fold: define the behaviours that count, and build the scoring system that turns clustered behaviour into actionable account-level intelligence.

The obvious candidates are form fills, demo bookings and webinar registrations. But those are the moments where the prospect has already decided to talk to you. By the time those events fire, the real buying activity has already happened. The HVA framework lives upstream of that, or at least it does if you want to get maximum value from it and get ahead of the competitors being evaluated in the same buying cycle.

The HVAs worth capturing are everything that happens before the commitment to a conversation:

Repeat pricing page visits, especially across different sessions and different people resolving to the same account

Comparison page reads, particularly the bottom-of-funnel “vs competitor” content

Security, compliance and procurement documentation downloads

API documentation engagement, which signals technical evaluation by an engineering buyer

Customer story and case study reads, especially when the prospect’s industry matches the story

Repeat returns to the product or platform overview pages

Multiple people from the same domain landing in the same week

Engagement with soft-gated content (no form, but tracks reading depth and dwell), particularly long-form analyst-style material

This is the buying committee researching you, costing themselves time and attention to do it, but not yet ready to put their hand up.

Most B2B teams treat these events as ambient website data. Useful, perhaps, for understanding traffic patterns. Not useful for measuring intent. That’s the inversion most stacks need to fix. These events are the intent signal. Form fills are the post-intent commitment to engagement.

In our experience working across B2B advertisers, somewhere around 95% of accounts seriously evaluating a vendor won’t fill in a form before the buying decision is materially formed. They look, lurk, share internally, and either go quiet or surface six weeks later with a decision already three-quarters made. If you’re measuring conversions only, you’re seeing the last few percent of the funnel. The HVA framework is how you make the rest of it visible.

The taxonomy: organising HVAs into a scoring framework

Defining HVAs is the easy part. Organising them into a scoring framework that activates against ad spend is where most teams get lost.

The framework needs to do three things: classify the behaviour by the type of intent it signals, weight the behaviour by the cost the visitor paid to perform it, and resolve the behaviour back to an account-level identity. Let’s take each in turn.

Classification by intent type. Different HVAs signal different things. A pricing page visit is a commercial qualification signal: the prospect is checking whether you fit their budget. An API documentation read is a technical fit signal: an engineer is checking whether you’ll integrate. A security documentation download is a procurement readiness signal: the prospect is moving you toward formal evaluation. A comparison page read is a competitive signal: they’re benchmarking you against alternatives. These are not interchangeable. A high score driven by commercial signals tells a different story than a high score driven by technical signals, and both deserve different sales follow-up.
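A rough sketch of that classification step, in Python. It tags page paths with the four intent types named above and counts an account’s events by type. The URL prefixes and the function names (`classify`, `intent_profile`) are assumptions for illustration, so swap in your own site structure.

```python
from collections import Counter
from typing import Optional

# Hypothetical URL prefixes; replace with your own site structure.
INTENT_TYPE_BY_PREFIX = {
    "/pricing": "commercial",    # checking budget fit
    "/docs/api": "technical",    # checking integration fit
    "/security": "procurement",  # moving toward formal evaluation
    "/compare": "competitive",   # benchmarking against alternatives
}


def classify(path: str) -> Optional[str]:
    """Return the intent type for a page path, or None if it is not an HVA."""
    for prefix, intent_type in INTENT_TYPE_BY_PREFIX.items():
        if path.startswith(prefix):
            return intent_type
    return None


def intent_profile(paths: list[str]) -> Counter:
    """Count an account's HVA events by intent type, ignoring non-HVA pages."""
    return Counter(t for t in map(classify, paths) if t is not None)


profile = intent_profile(
    ["/pricing", "/pricing/enterprise", "/docs/api/auth", "/blog/news"]
)
print(profile.most_common(1))  # the dominant intent type for this account
```

Keeping the profile split by type, rather than collapsing it to one number, is what lets sales see whether a high score is commercially or technically driven.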

Weighting by cost paid. Not all behaviours are equal. A single pricing page visit might score one point. A return visit within seven days scores three. A pricing page visit followed by a customer story read in the same session scores five. The progression matters more than the count. The weighting should reward depth of evaluation, not breadth of curiosity.

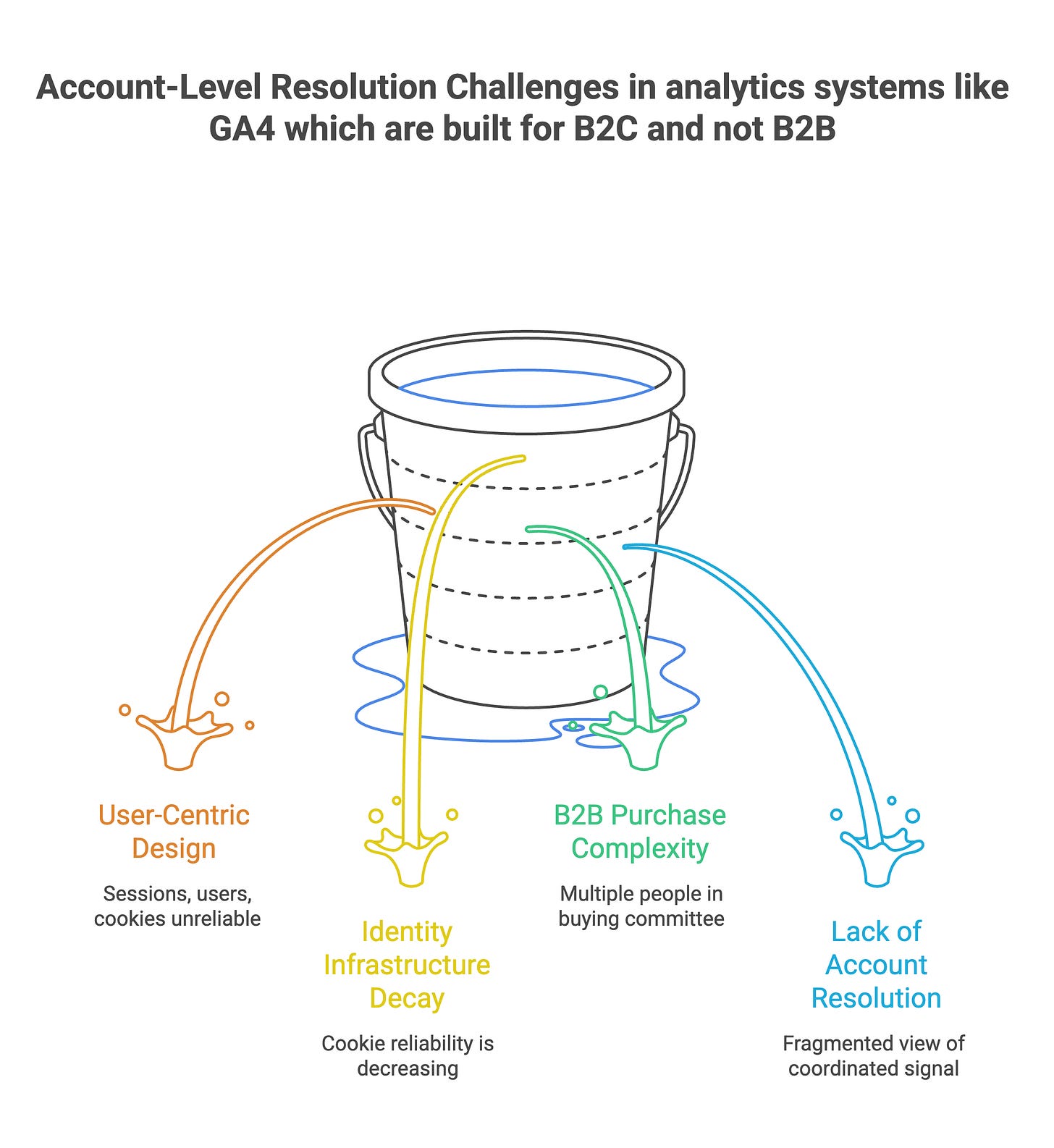
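To make the weighting concrete, here is a minimal sketch using the example points above (one, three and five). The `Event` shape, the page-type labels and the rule that the deepest matching tier wins are my assumptions, not a standard scoring model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Event:
    session_id: str
    page_type: str  # assumed labels, e.g. "pricing" or "customer_story"
    at: datetime


def score_pricing_visits(events: list[Event]) -> int:
    """Score each pricing visit by progression: 1 base, 3 return, 5 deepened."""
    ordered = sorted(events, key=lambda e: e.at)
    total = 0
    last_pricing: Optional[Event] = None
    for i, event in enumerate(ordered):
        if event.page_type != "pricing":
            continue
        # Deepened: a customer story read later in the same session.
        deepened = any(
            later.session_id == event.session_id
            and later.page_type == "customer_story"
            for later in ordered[i + 1:]
        )
        # Returned: a new session within seven days of the previous pricing visit.
        returned = (
            last_pricing is not None
            and last_pricing.session_id != event.session_id
            and event.at - last_pricing.at <= timedelta(days=7)
        )
        total += 5 if deepened else 3 if returned else 1
        last_pricing = event
    return total
```

A first visit followed by a return three days later scores four; the same two visits ten days apart score two. That gap is the point: the score rewards progression, not raw count.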

Account-level resolution. This is the part that breaks most stacks. Browser-level analytics tools like GA4 are user-centric by design. Each session is a user, each user is a cookie, and the cookie is increasingly unreliable as identity infrastructure decays. To make HVAs work, you need to resolve sessions back to organisations using IP-to-business graphs, reverse-DNS lookups, identity graph providers, or first-party login data where you have it. With six to ten people per buying committee on a typical enterprise B2B purchase (a figure Gartner has cited consistently for years), without account-level resolution you are reading a fragmented version of a coordinated signal. The committee is doing one thing. Your analytics layer is showing six unrelated things.

When all three layers work together, the HVA score becomes a meaningful operating metric. A score that crosses a threshold is a buying signal. A score that has crossed and held is a momentum signal. A score that has crossed, held, and broadened to new contacts within the same account is the kind of signal you want sales tracking actively, and the kind of signal you want your paid media leaning into.
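The three states described above (crossed, held, broadened) can be sketched as a small classifier over periodic account snapshots. The `Snapshot` shape, the state labels and the two-period hold window are assumptions for illustration; tune them to your own buying cycle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    score: int     # account-level HVA score for the period
    contacts: int  # distinct people seen from the account in the period


def signal_state(history: list[Snapshot], threshold: int, hold_periods: int = 2) -> str:
    """Classify an account from its snapshots, ordered oldest first."""
    if not history or history[-1].score < threshold:
        return "below_threshold"
    recent = history[-hold_periods:]
    held = len(recent) == hold_periods and all(s.score >= threshold for s in recent)
    if not held:
        return "buying_signal"  # crossed, but not yet sustained
    broadened = recent[-1].contacts > recent[0].contacts
    return "broadening" if broadened else "momentum"
```

Sales tracking would key off `"broadening"`, while paid media can start leaning in at `"buying_signal"`.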

This is where the language of “lead scoring” most teams already have falls short. Most lead scoring systems score individuals, not accounts. They score known contacts, not anonymous visitors. They score against demographic and firmographic fit, not behavioural change. HVA scoring is account-first, behaviour-first, and change-state aware. It’s a different operating model.

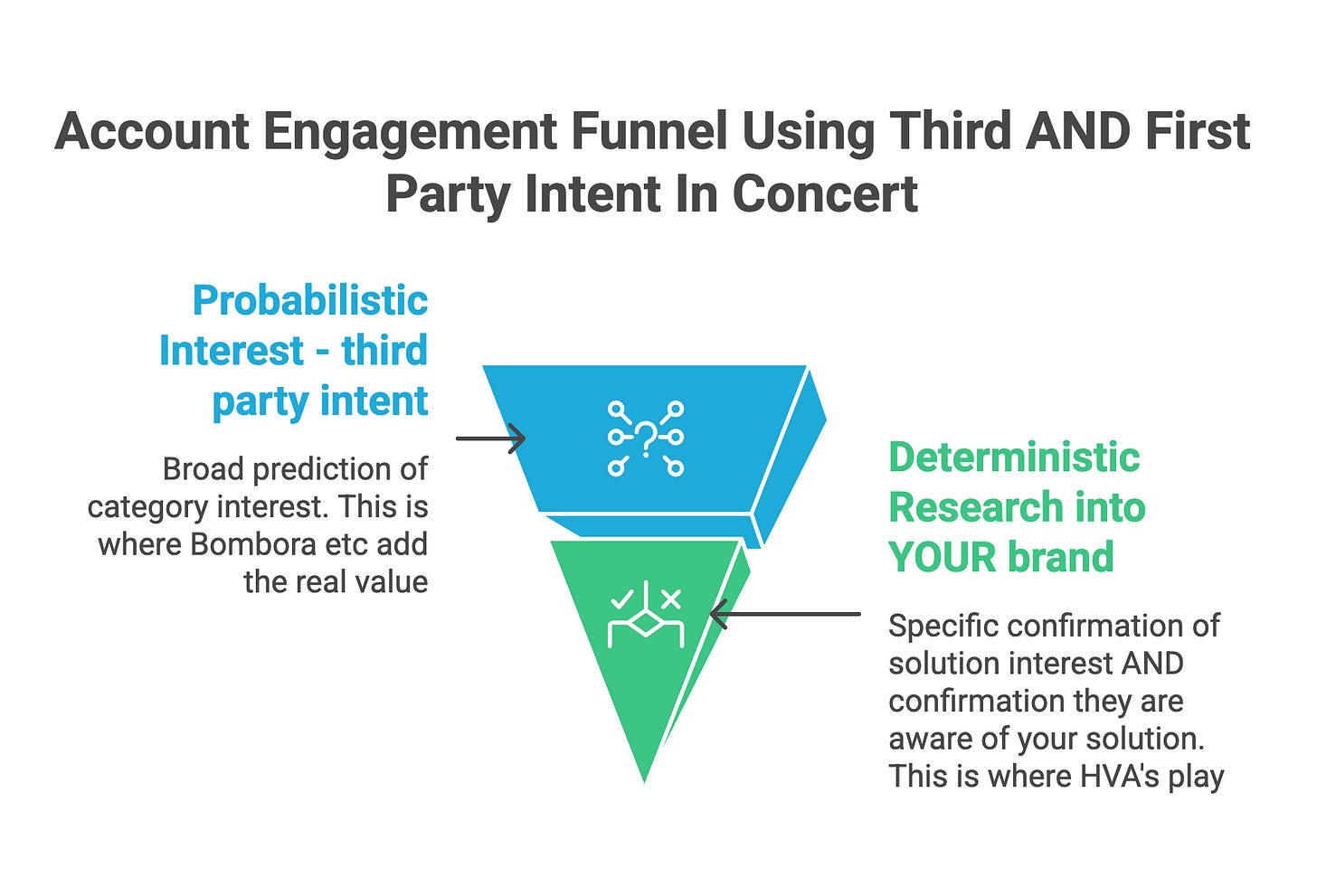

How HVAs combine with third-party intent (not against)

A common misread, when teams first encounter HVAs, is to position them in opposition to third-party intent data. The argument runs: third-party intent is bought, generic, probabilistic, and increasingly commoditised. HVAs are first-party, deterministic, and proprietary. So drop the third-party tools.

This is wrong. Or at least, it’s a shortcut that misses what third-party intent does well.

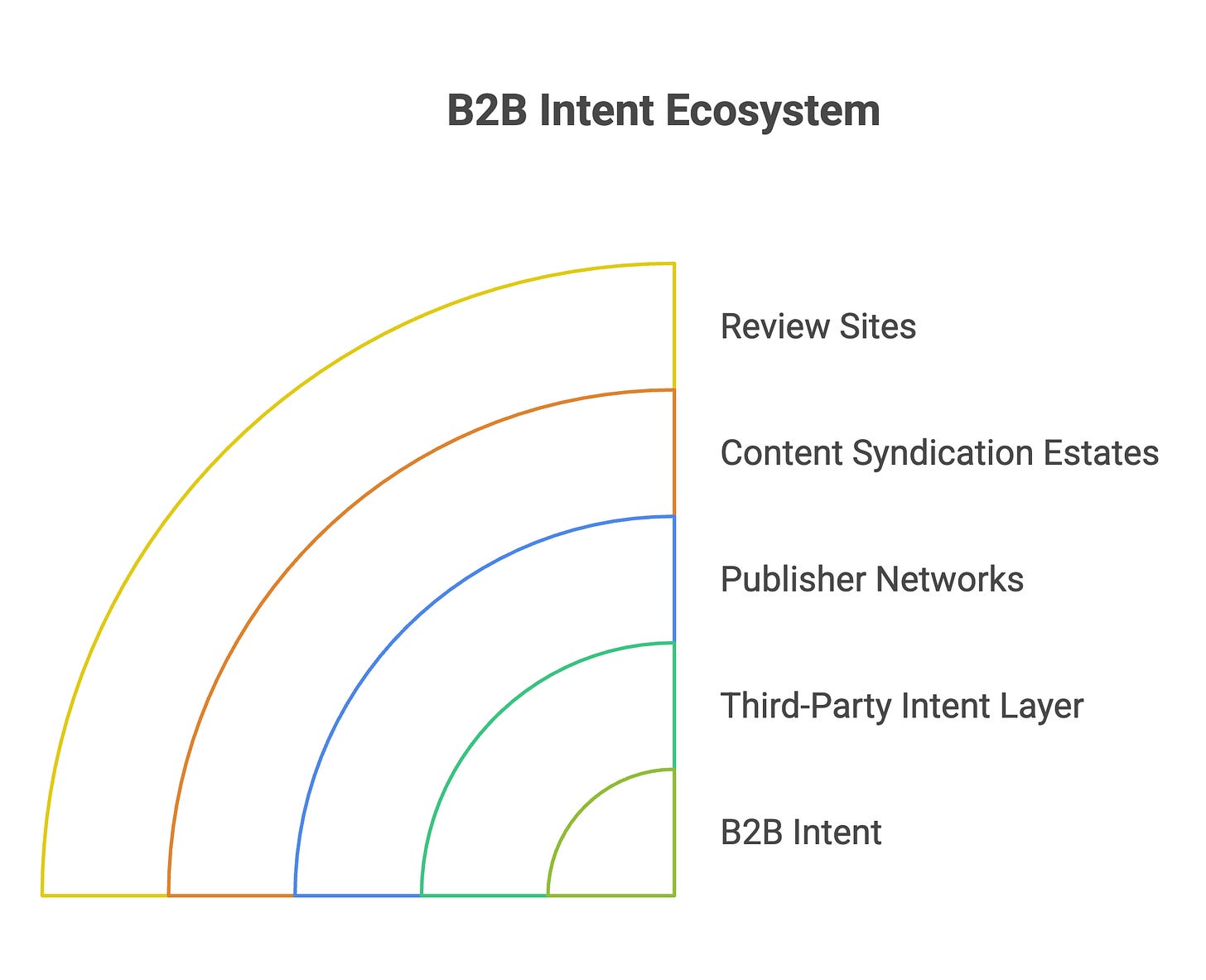

Bombora, 6sense, TechTarget and the wider B2B intent ecosystem all work by aggregating consumption signals across publisher networks, content syndication estates, review sites, and B2B-specific data sources. That is a scale and depth of signal that no first-party data stack can match, so don’t rush to discount it. When an account is researching a topic across the open web, before they ever land on your site, that activity is captured in the third-party intent layer. By the time they show up to your domain, they have already done weeks of category-level research that you had no direct visibility into.

That category-level research is what third-party intent tells you. It tells you the account is in-market. It tells you what topics they care about. It tells you whether the surge in interest is new or sustained. It tells you, in aggregate, that something is happening at the organisation. None of it is specific to you.

What it doesn’t tell you is whether they have started researching you specifically. That’s what HVAs tell you. The handoff is precise. Are you on this account’s radar or not? That is the question HVAs should be tasked with answering.

Third-party intent is the upstream layer: probabilistic, broad, predictive. It tells you the account is in-market for your category.

HVAs are the downstream layer: deterministic, narrow, confirmatory. They tell you the account is now researching your solution.

Stack them together and you get a complete in-market picture. An account that’s surging on Bombora topics relevant to your category, that hasn’t yet visited your site, is a top-of-funnel paid media target. The same account, now firing HVAs on your pricing page, is a mid-funnel acceleration target. The same account, with HVAs broadening across six contacts in a week, is a sales-ready notification.
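The handoff logic in that paragraph can be sketched as a simple routing function. It assumes you have already reduced your third-party feed to a per-account `category_surge` flag and your HVA layer to seven-day counts. Those input names and the stage labels are mine, not any vendor’s API, and the six-contact trigger is the figure from the example above.

```python
def handoff_stage(
    category_surge: bool,
    hva_count_7d: int,
    hva_contacts_7d: int,
    sales_ready_contacts: int = 6,
) -> str:
    """Route an account by combining third-party surge with first-party HVAs."""
    if hva_count_7d > 0 and hva_contacts_7d >= sales_ready_contacts:
        return "sales_ready_notification"  # HVAs broadening across the committee
    if hva_count_7d > 0:
        return "mid_funnel_acceleration"   # now researching you specifically
    if category_surge:
        return "top_of_funnel_paid_media"  # in-market, not yet on your site
    return "monitor"


print(handoff_stage(category_surge=True, hva_count_7d=0, hva_contacts_7d=0))
```

HVAs are checked first on purpose: once an account is firing on your own site, the confirmatory layer should outrank the predictive one.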

Neither layer substitutes for the other. Teams that drop third-party intent because they’ve discovered HVAs lose the early-warning system. Teams that lean only on third-party intent miss the confirmation that the account is now researching them specifically, not just the category.

The teams winning at this in 2026 use both, with deliberate handoff logic between them.

The account-stitching problem most B2B teams won’t talk about

Here is the part where most B2B marketing stacks fall over, while everyone pretends the data is working.

Capturing HVAs at the individual user level is straightforward. Most analytics platforms can track pricing visits, document downloads, repeat engagement. You can build a basic score in an afternoon. What most stacks cannot do, even after years of investment, is stitch those individual behaviours back to a coherent account-level picture. This is the figurative million-dollar unlock, the game-changer that takes a nice data point and weaponises it.

The reasons are infrastructural, and they’re worth naming clearly. You cannot Claude Code this in an afternoon, because you won’t get the signal you need out of GA4 to execute on it.

GA4 is user-centric. The entire data model is built around user IDs, sessions, and events. There is no native “account” concept. To get account-level views, you need to enrich the user identity with firmographic data, and most teams either don’t do this, or do it in batch processing that creates a delay between the behaviour and the actionable intelligence.

Cookie-based identity is degrading. Safari blocks third-party cookies by default. Firefox blocks or partitions them by default. Chrome pushed back its own deprecation plans repeatedly and has since stepped back from removing them outright, but the wider direction of travel is still towards less cookie coverage, not more. First-party cookies are more resilient but still get cleared, blocked, and reset. By the time a buying committee has engaged across two months and six devices, the cookie identity layer has fragmented several times.

Identity resolution providers help, but they’re partial. The best deterministic identity graphs cover maybe sixty to seventy percent of business traffic. The rest is probabilistic, which means the account stitching is inferred, not confirmed. That’s fine for analytics and reporting, but it’s a different conversation when you’re activating against the data for paid media bidding or sales notifications.

Buying committees compound the problem. When six to ten people from the same company touch your site across a six-week buying cycle, they will use multiple devices, multiple browsers, sometimes different VPNs, sometimes their home networks. Without organisational stitching, you see fragments. Person A in the morning on the office network. Person B in the afternoon from home. Person C on a phone, on cellular, three weeks later. To you, that’s three unrelated visitors. To the buying committee, that’s one coordinated evaluation effort.
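Here is a minimal sketch of the Person A, B and C example. The two lookup tables are hypothetical stand-ins for an IP-to-business graph and first-party login data. The domain and the IPs (reserved documentation ranges) are made up.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical lookups standing in for real identity infrastructure.
IP_TO_ACCOUNT = {"203.0.113.10": "acme.example"}    # office network
VISITOR_TO_ACCOUNT = {"person-b": "acme.example"}   # e.g. matched via login


def resolve_account(visitor_id: str, ip: str) -> Optional[str]:
    """Prefer a known-visitor match, then fall back to the IP lookup."""
    return VISITOR_TO_ACCOUNT.get(visitor_id) or IP_TO_ACCOUNT.get(ip)


def stitch(visits: list[tuple[str, str]]) -> tuple[dict[str, set[str]], list[str]]:
    """Group (visitor_id, ip) visits by account; return unresolved visitors too."""
    accounts: dict[str, set[str]] = defaultdict(set)
    unresolved: list[str] = []
    for visitor_id, ip in visits:
        account = resolve_account(visitor_id, ip)
        if account is None:
            unresolved.append(visitor_id)
        else:
            accounts[account].add(visitor_id)
    return dict(accounts), unresolved


accounts, unresolved = stitch([
    ("person-a", "203.0.113.10"),  # morning, office network
    ("person-b", "198.51.100.7"),  # afternoon, home network
    ("person-c", "192.0.2.44"),    # phone on cellular, three weeks later
])
print(accounts, unresolved)
```

In this toy run Person A resolves by IP, Person B by login, and Person C stays unresolved. That is the realistic outcome: stitching recovers part of the committee, and your coverage rate decides how much.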

This is the gap where activation breaks down. The HVAs are firing. The data is being logged. Nobody is reading the firing pattern as a coordinated buying signal because the analytics layer cannot stitch the individual events to the account that’s actually doing the buying.

The fix is structural, not tactical. It requires an identity resolution layer that runs at the visit, not in batch. It requires the firmographic enrichment to be live, not nightly. It requires the activation layer, whether that’s sales notifications or paid media bidding, to consume account-level signal rather than user-level signal. And it requires the reporting layer to surface account-level momentum, not user-level page views.

Without this stitching, HVAs are a useful concept that produces a slightly enriched analytics report. With it, HVAs become the operating layer of B2B paid media.

Activating HVAs in advertising, and the dark funnel link

Once HVAs are scored, stitched to accounts, and surfacing in real time, the question becomes what to do with them.

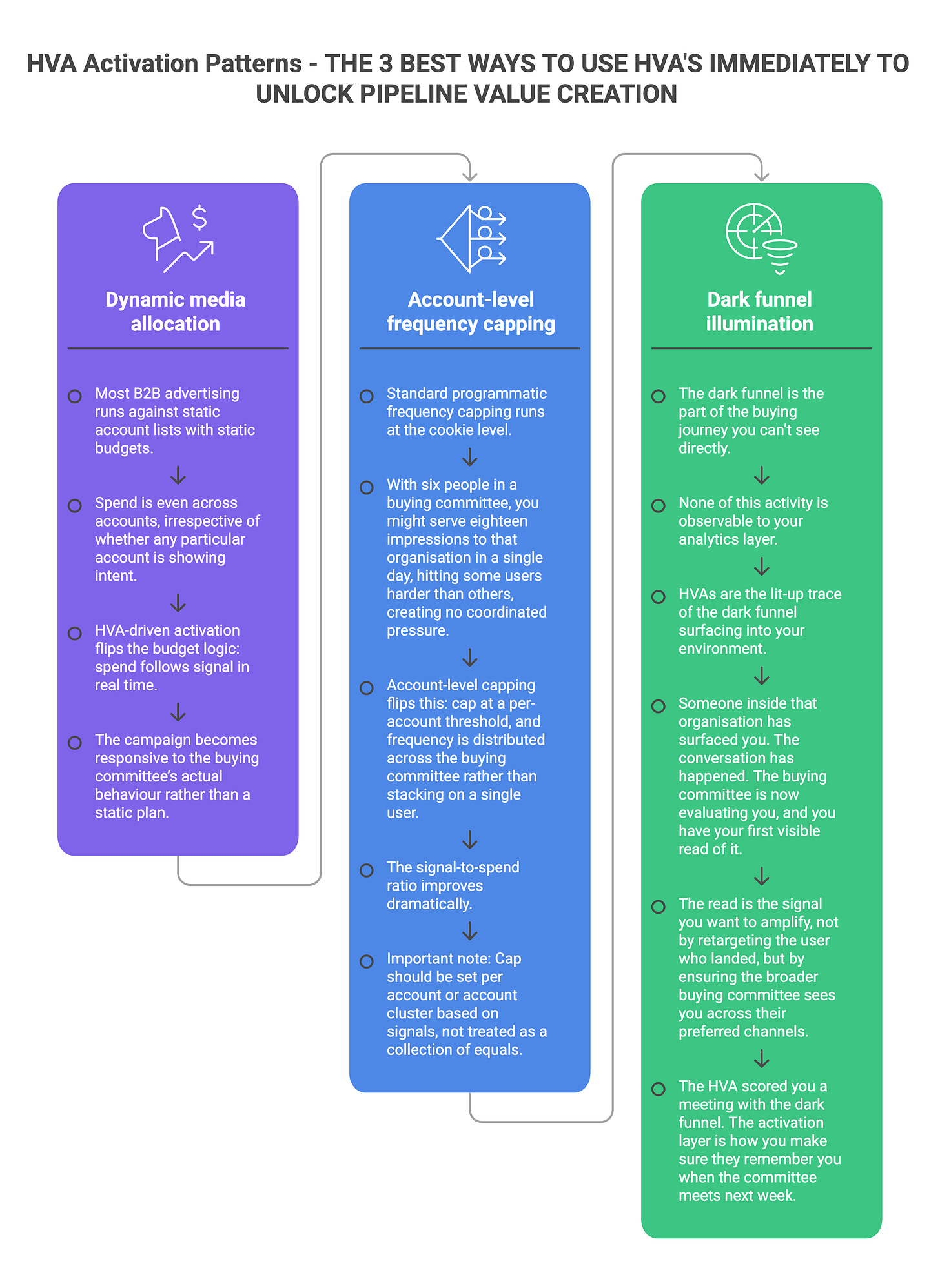

Three activation patterns matter, and each requires the upstream HVA architecture to be in place to work at all.

Dynamic media allocation. Most B2B advertising runs against static account lists with static budgets. The marketing team uploads a target account list to the DSP, sets a budget, and the campaign runs until the budget is spent. Spend is even across accounts, irrespective of whether any particular account is showing intent. This is fundamentally inefficient. Accounts that are surging deserve more spend, not less. Accounts that are silent deserve less, not the same. HVA-driven activation flips the budget logic: spend follows signal in real time. An account crossing an HVA scoring threshold gets a budget increase, more impressions, more frequency. An account dropping below a threshold gets budget pulled. The campaign becomes responsive to the buying committee’s actual behaviour rather than a static plan.
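As a sketch of spend following signal, the function below splits a daily budget across accounts in proportion to HVA score and pulls budget from anything under the threshold. The proportional split is one simple choice among many, and the account names and numbers are made up.

```python
def allocate_budget(
    scores: dict[str, float], daily_budget: float, threshold: float
) -> dict[str, float]:
    """Split the budget across accounts at or above threshold, pro rata by score."""
    active = {a: s for a, s in scores.items() if s >= threshold}
    total = sum(active.values())
    if total <= 0:
        return {account: 0.0 for account in scores}  # nobody is showing intent
    return {
        account: round(daily_budget * active[account] / total, 2)
        if account in active
        else 0.0
        for account in scores
    }


plan = allocate_budget(
    {"acme": 60, "globex": 20, "initech": 5}, daily_budget=1000, threshold=10
)
print(plan)
```

Re-run this every time scores refresh and the campaign tracks the buying committee’s behaviour rather than the original plan. In practice you would probably keep a small floor for silent accounts instead of a hard zero.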

Account-level frequency capping. Standard programmatic frequency capping runs at the cookie level. If you cap at three impressions per user per day, that’s three impressions for the user, not three for the account. With six people in a buying committee, you might serve eighteen impressions to that organisation in a single day, hitting some users harder than others, creating no coordinated pressure. Account-level capping flips this: cap at a per-account threshold, and frequency is distributed across the buying committee rather than stacking on a single user. The signal-to-spend ratio improves dramatically.

One point to note: on a fast read this sounds like you should cap every account equally, the way programmatic doctrine treats users. But you are not combining first- and third-party intent at account level just to then treat all accounts as equals. The game is to use this data to inform the cap, and to set that cap per account or account cluster based on the signals we’re weaponising. It’s a small but significant point. Treating a TAL as a collection of equals is one of the biggest single flaws I see every day.
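A minimal sketch of account-level capping with a signal-informed cap. Every number here (base cap of six, surge cap of twelve, per-user ceiling of two, surge threshold of fifty) is an assumption for illustration, and real DSPs expose capping in their own ways.

```python
from collections import Counter


def account_cap(score: float, base_cap: int = 6, surge_cap: int = 12,
                surge_threshold: float = 50.0) -> int:
    """Pick a daily impression cap for an account from its HVA score."""
    return surge_cap if score >= surge_threshold else base_cap


class AccountFrequencyCapper:
    """Caps daily impressions per account, with a per-user ceiling inside it."""

    def __init__(self, caps: dict[str, int], per_user_cap: int = 2):
        self.caps = caps
        self.per_user_cap = per_user_cap
        self.served_account = Counter()
        self.served_user = Counter()

    def should_serve(self, account: str, user: str) -> bool:
        if self.served_account[account] >= self.caps.get(account, 0):
            return False  # the organisation has had its share for today
        if self.served_user[(account, user)] >= self.per_user_cap:
            return False  # leave room for other committee members
        self.served_account[account] += 1
        self.served_user[(account, user)] += 1
        return True


capper = AccountFrequencyCapper({"acme.example": account_cap(score=20)})
served = sum(
    capper.should_serve("acme.example", f"user-{u}")
    for u in range(6)   # six committee members
    for _ in range(3)   # three bid opportunities each
)
print(served)
```

With a user-level cap of three, those same eighteen opportunities would all serve. Here the account stops at its own cap, and the per-user ceiling keeps one person from absorbing all of it.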

Dark funnel illumination. This is the use case that often gets overlooked, and is arguably the most strategically interesting.

The dark funnel is the part of the buying journey you can’t see directly. Slack conversations between buyers and their peers. Reddit threads and LinkedIn DMs about your product. Conversations at industry events. Internal champions building the case for you in meetings you never attend. None of this activity is observable to your analytics layer.

HVAs are the lit-up trace of the dark funnel surfacing into your environment. When an account that has had no observable interaction suddenly starts firing HVAs across three or four people in a week, the dark funnel is working. Someone inside that organisation has surfaced you. The conversation has happened. The buying committee is now evaluating you, and you have your first visible read of it.

That read is the signal you want to amplify. Not by retargeting the user who landed, but by ensuring the broader buying committee sees you across their preferred channels. CTV in the evening, audio on the commute, native in the trade publications they read. The HVA scored you a meeting with the dark funnel. The activation layer is how you make sure they remember you when the committee meets next week.

The five questions to ask about your own HVA stack

These are the questions I work through when auditing a B2B marketing stack. They will surface most of what’s broken, fast.

1. What HVAs are you capturing, and how are they classified? Can you list, by name, the specific behaviours you score as HVAs? Are they classified by intent type (commercial, technical, procurement, competitive)? If the answer is “we track pricing page visits and demo requests,” you have two signals, not an HVA framework. You should have ten or more distinct behaviours, organised into categories, with weights attached.

2. Is your scoring account-level, or user-level? When the score crosses a threshold, are you alerted to the account, or to a single user identifier? If it’s user-level, you’re missing the buying-committee signal entirely. A score of seventy from one user is interesting. A combined score of forty across four users in the same account is more interesting. The framework should always surface the latter.

3. What’s your identity stitching coverage, and is it deterministic or probabilistic? What percentage of your site traffic resolves to an organisation, and of that, what percentage is matched deterministically versus inferred? If you don’t know the answer, ask your analytics or RevOps team. Industry-typical for un-augmented B2B sites is somewhere between thirty and fifty percent firmographic match coverage. Augmenting with an identity resolution provider can push that to seventy or eighty, but the gap matters for what you can activate against.

4. Is the HVA signal flowing into ad activation in real time, or in batch? When an account crosses an HVA threshold, how quickly does that signal reach the DSP, the LinkedIn campaign manager, the CTV buy? If it’s a weekly export from analytics, you’ve already lost the moment. If it’s real-time via an integration or audience pipeline, you can act on the buying-committee surge while it’s still active. Real-time changes what HVAs are worth.

5. Are you measuring HVA impact on pipeline, or just on traffic? The HVA scoring framework needs to be tied back to pipeline outcomes to demonstrate value. Which HVAs, in which combinations, most strongly predict opportunity creation? Which scoring thresholds best correlate with sales engagement? Without this measurement loop, you are running a sophisticated behaviour-tracking system that doesn’t tell you whether it’s working. With it, you can refine the scoring weights, prune the noisy HVAs, and tune the framework to your actual buying cycle.

If you ask these five questions and your team can’t answer them cleanly, you have a stack problem. Not a strategy problem. Not a budget problem. A stack problem, fixable in weeks rather than quarters, once the diagnostic is honest.

Where this is heading

Three shifts are reshaping how HVAs operate in B2B paid media.

HVA scoring is becoming the default unit of B2B account targeting. The shift from static account lists to dynamic, signal-fuelled account targeting is well under way at the more sophisticated B2B marketing teams. Over the next two years this becomes the default. Static account lists will feel as outdated as buying audience segments by demographic.

Identity resolution is consolidating into a few dominant graphs. The B2B identity layer has been fragmented for years, with each vendor maintaining its own graph and each integration creating its own translation layer. The market is consolidating, with a handful of identity providers becoming the de facto graphs. This makes account-level HVA stitching cheaper and more reliable, but also more concentrated, with attendant risks for any vendor that ends up locked into a single graph.

The dark funnel layer is becoming addressable. HVAs combined with the right identity infrastructure are increasingly able to surface dark funnel activity into the activation layer. Not perfectly. Not for every account. But the trajectory is clear: the gap between what the buying committee is actually doing and what the B2B marketing team can see is closing.

The B2B marketing teams that operate against this trajectory will pull away from the ones still measuring success in form fills. The gap is widening, even if the budgets look similar.

The takeaway

A High Value Action is not a website analytics event. It’s the structural alternative to a conversion measurement framework that doesn’t fit B2B’s reality. It’s how you read buying committee intent before the buying committee is ready to identify itself.

If you’re running B2B marketing in 2026 and you cannot articulate which HVAs you’re capturing, how you score them, how you stitch them to accounts, and how you activate against them, you are operating with the wrong tooling. The good news is the diagnosis is cheap, and the fix is mostly infrastructural rather than strategic.

The five questions above are the fastest route to honesty. If you can’t answer them cleanly, that’s your roadmap.

Next in this series: the dark funnel playbook, how to activate B2B paid media against the buying activity you can’t directly observe, and what changes when the activation layer learns to read what the analytics layer can’t see.

The B2B Stack is written by Mike Harty, founder of FunnelFuel, a B2B-native programmatic managed service operating across London, New York and Singapore. If you’re auditing your own HVA architecture and want a second pair of eyes, reply to this email and we can compare notes. To connect with me, book a 30-minute meeting here: https://calendar.app.google/PDZ36wk1Yci7PuDf6